Vespa.ai vs Elasticsearch: Which is Best for Real-Time Vector Search?

A deep dive into Vespa.ai vs Elasticsearch for 2026. Learn why Vespa's native tensor support and AI-driven query conversion win for real-time search intelligence.

Vespa.ai vs. Elasticsearch: Choosing the Right Vector Database for Real-Time Search Intelligence

In 2026, "search" is no longer just about matching keywords. While working toward a Data Growth Engineer role, I’ve seen firsthand how the shift toward vector search and Retrieval-Augmented Generation (RAG) has forced architects to reconsider the foundations of their search stacks.

The big question usually boils down to two heavyweights: Elasticsearch, the battle-tested industry standard, and Vespa.ai, the high-performance "big iron" of search engines.

Having implemented multiple production-scale projects on Vespa, including an AI-driven natural language query interface, I want to break down why the "right" choice depends on whether you are building a library or a brain.

The Architectural Divide

Elasticsearch: The Versatile Generalist

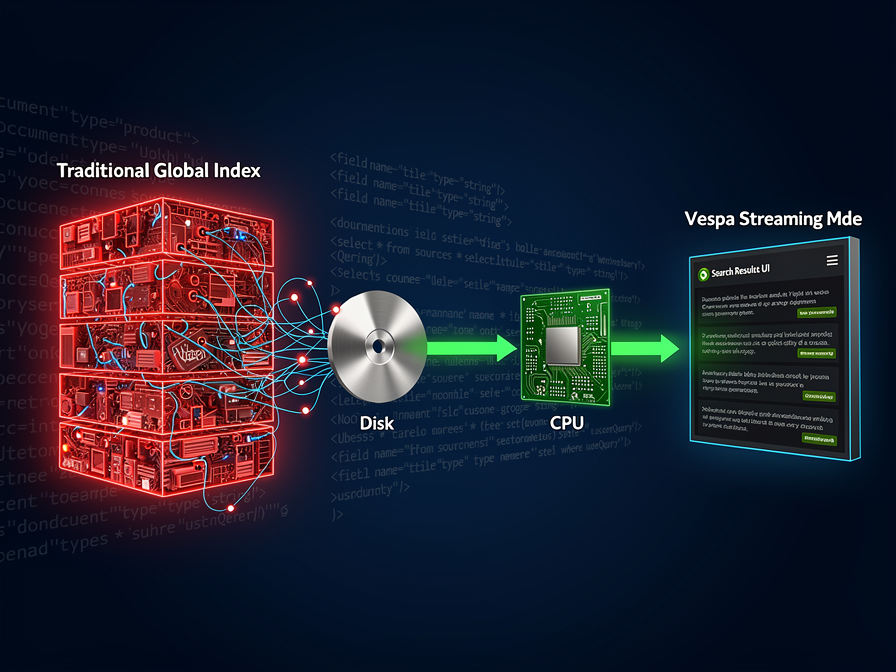

Elasticsearch remains a powerhouse for log analytics and standard full-text search. Its vector capabilities (k-NN over dense_vector fields), however, were added on top of an engine designed for text, so they can feel "bolt-on". Because it’s built on Lucene’s immutable segments, newly written documents become searchable only after the next index refresh (every second by default), which is a bottleneck for truly real-time applications.
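To make the refresh lag concrete, here is a minimal sketch of the two knobs Elasticsearch exposes for it: the index-level refresh_interval setting and the per-request refresh=wait_for parameter. The helper names and the "articles" index are my own placeholders, and the functions only build the request pieces rather than sending them.

```python
def refresh_settings(interval: str = "1s") -> dict:
    """Body for PUT /<index>/_settings; "1s" is the Elasticsearch default."""
    return {"index": {"refresh_interval": interval}}


def index_request(index: str, doc_id: str, wait_for_refresh: bool = False) -> tuple[str, str]:
    """Return (method, path) for indexing one document.

    With refresh=wait_for the call returns only after a refresh has made
    the document searchable, trading write latency for visibility.
    """
    path = f"/{index}/_doc/{doc_id}"
    if wait_for_refresh:
        path += "?refresh=wait_for"
    return "PUT", path


# Hypothetical index name, for illustration only.
print(refresh_settings())
print(index_request("articles", "42", wait_for_refresh=True))
```

Lowering the interval or waiting on every write narrows the gap, but both cost indexing throughput, which is the trade-off I am pointing at here.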

Vespa.ai: The Real-Time Specialist

Vespa was built from the ground up for big data serving and real-time inference. Unlike Elasticsearch, Vespa applies writes to mutable data structures, so an updated document is visible to queries as soon as the write is acknowledged, with no refresh cycle to wait for. For projects where data freshness is a product requirement (like stock markets or breaking news), Vespa is the clear winner.
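As a rough illustration of what such an update looks like, the sketch below builds a partial update for Vespa's /document/v1 API, where a PUT with an "assign" operation changes individual fields in place. The namespace, document type, field name, and endpoint are hypothetical placeholders, not values from my projects, and the function only constructs the request.

```python
import json
from urllib.parse import quote


def partial_update(namespace: str, doc_type: str, doc_id: str, fields: dict,
                   base_url: str = "http://localhost:8080") -> tuple[str, str, str]:
    """Return (method, url, body) for a /document/v1 partial update."""
    # Percent-encode each path segment so unusual ids cannot break the URL.
    path = "/".join(quote(part, safe="") for part in (namespace, doc_type, "docid", doc_id))
    url = f"{base_url}/document/v1/{path}"
    # Each field gets an "assign" operation that overwrites its current value.
    body = {"fields": {name: {"assign": value} for name, value in fields.items()}}
    return "PUT", url, json.dumps(body)


# Hypothetical stock-quote document, for illustration only.
method, url, body = partial_update("finance", "quote", "AAPL", {"price": 189.5})
print(method, url)
print(body)
```

Only the named fields are touched, which is why high-frequency updates such as prices stay cheap.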

Hands-on Experience: Bridging the Gap with AI

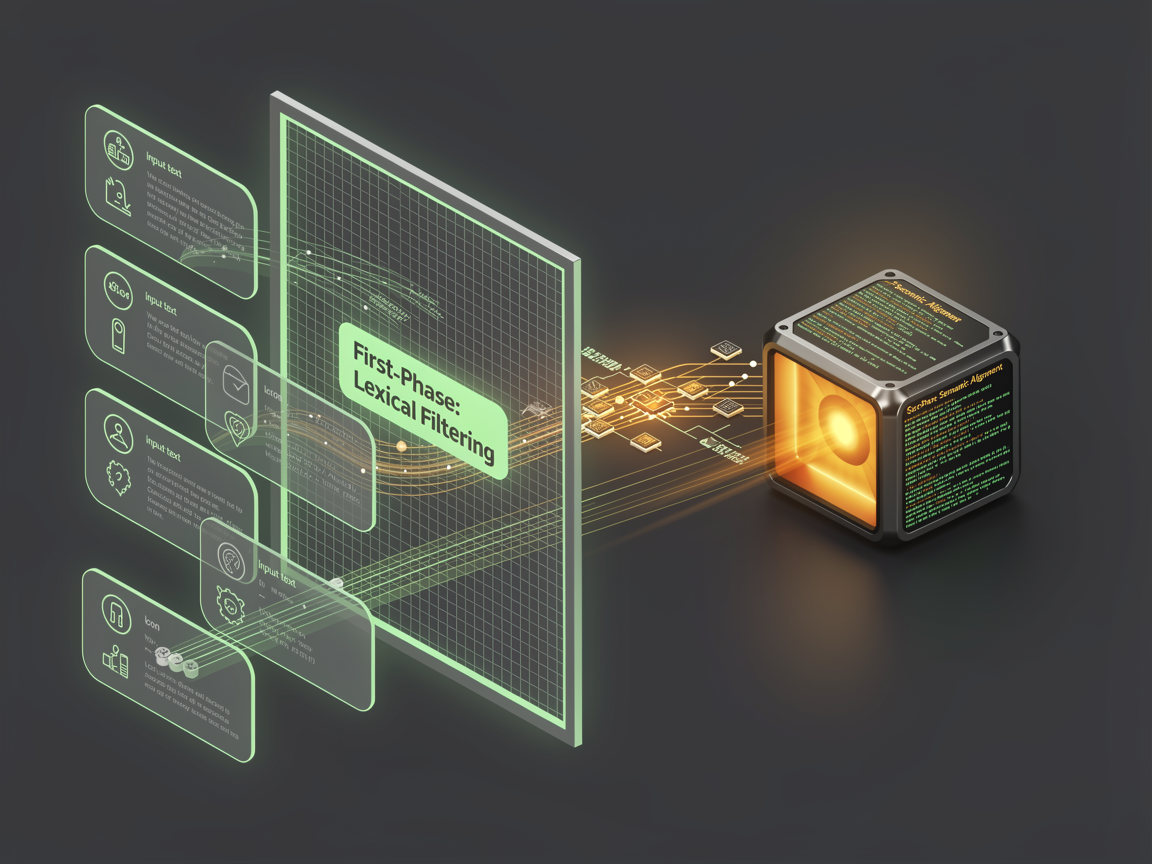

In my recent projects with Vespa, I focused on solving the "YQL Complexity" problem. Vespa’s YQL (Yahoo Query Language) is incredibly powerful for hybrid search (combining vectors + tensors + text), but it has a steep learning curve for non-technical users.

The Project: Natural Language to YQL

I developed a layer that allows users to type simple English—for example: "Show me 3D portfolios created in Gurugram with a minimalist aesthetic"—and automatically converts it into a formal Vespa YQL statement.

How it works:

- Semantic Intent: An LLM parses the user's intent and identifies filters (Location: Gurugram) and semantic concepts (Minimalist aesthetic).

- Vector Conversion: The "minimalist" concept is converted into a vector embedding.

- YQL Generation: The system constructs a hybrid query:

    select * from sources * where {targetHits: 10}nearestNeighbor(embedding, q_embedding) and location contains "Gurugram";

- Instant Results: Vespa processes this hybrid query in under 100 ms, returning results that match both the hard filters and the subjective "vibe" of the search.
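The YQL generation step can be sketched roughly as follows. This is a simplified illustration, not the production layer: ParsedIntent stands in for whatever the LLM returns, the embedding is assumed to be computed elsewhere, and the "hybrid" rank profile name is hypothetical (the profile would need to declare q_embedding as a query input). The function only builds the request body for Vespa's query API.

```python
from dataclasses import dataclass, field


@dataclass
class ParsedIntent:
    """Hypothetical shape of the LLM's parsed output."""
    filters: dict[str, str] = field(default_factory=dict)  # hard filters
    concept: str = ""                                      # text to embed


def quote_yql(value: str) -> str:
    """Quote a string literal for YQL, escaping backslashes and double quotes."""
    escaped = value.replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'


def build_query(intent: ParsedIntent, embedding: list[float],
                target_hits: int = 10, rank_profile: str = "hybrid") -> dict:
    """Combine the vector clause and the hard filters into one query body."""
    clauses = [f"{{targetHits: {target_hits}}}nearestNeighbor(embedding, q_embedding)"]
    for name, value in sorted(intent.filters.items()):
        # Field names come from an LLM, so reject anything that is not a plain identifier.
        if not name.isidentifier():
            raise ValueError(f"unexpected field name: {name!r}")
        clauses.append(f"{name} contains {quote_yql(value)}")
    return {
        "yql": "select * from sources * where " + " and ".join(clauses),
        "input.query(q_embedding)": embedding,  # the query tensor
        "ranking": rank_profile,                # hypothetical profile name
        "hits": target_hits,
    }


intent = ParsedIntent(filters={"location": "Gurugram"}, concept="minimalist aesthetic")
print(build_query(intent, [0.1, 0.2, 0.3])["yql"])
```

Validating field names and escaping values matters here because the filters originate from model output, and an unescaped quote would otherwise change the meaning of the query.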

Vespa.ai vs. Elasticsearch: A Quick Comparison

| Feature | Elasticsearch | Vespa.ai |
| --- | --- | --- |
| Primary Use Case | Logs, Analytics, Basic Search | Real-time Search, RAG, Recommendations |
| Vector Support | k-NN on dense_vector fields, added to a text-first engine | Native Tensor Support |
| Data Freshness | Near Real-time (Refresh lag) | True Real-time |
| Scaling | Horizontal | Linear & Automated |
| Ranking | Script-based | Advanced Machine-Learned Ranking |

The Verdict: Which Should You Choose?

Choose Elasticsearch if:

- You are already heavily invested in the ELK stack.

- Your primary use case is log management or internal enterprise search.

- You have moderate vector search needs that don't require complex re-ranking.

Choose Vespa.ai if:

- You are building a Data Growth engine where search quality directly impacts revenue.

- You need to combine vector search with complex business logic (Hybrid Search).

- You want to run AI models (ONNX, XGBoost) directly inside the search engine for ranking.

After moving several projects to Vespa, the performance gains in latency and the flexibility of the tensor framework have made it my go-to for any application that needs to be "smarter" than a basic text match.
