Native Vector Search in Eloquent: Semantic Discovery the Easy Way

Why manage a massive external search cluster when your favorite framework can handle it natively? In 2026, Laravel and pgvector finally work together well enough to give us semantic search without the infrastructure headache. See how I’m using native Eloquent methods to replace legacy keyword matching with AI-driven intent matching, which is perfect for local marketplaces and dealer directories.

Finding the Needle: Why Native Vector Search in Eloquent is a 2026 Game Changer

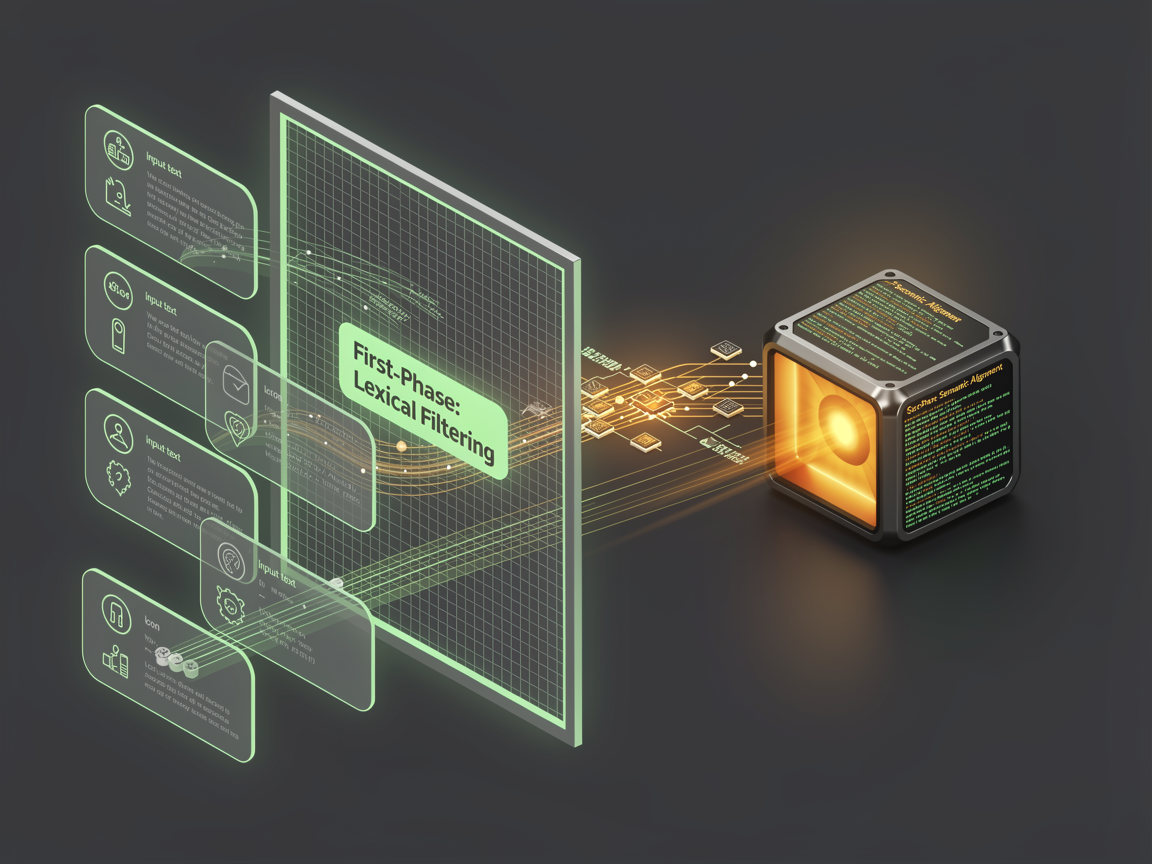

Remember when "search" just meant hitting a database with a LIKE '%query%' clause and hoping for the best? Honestly, it was a mess. You’d type "crimson sneakers" and get zero results because your product listings only ever said "red." We’ve spent years building complex workarounds, but things have changed.

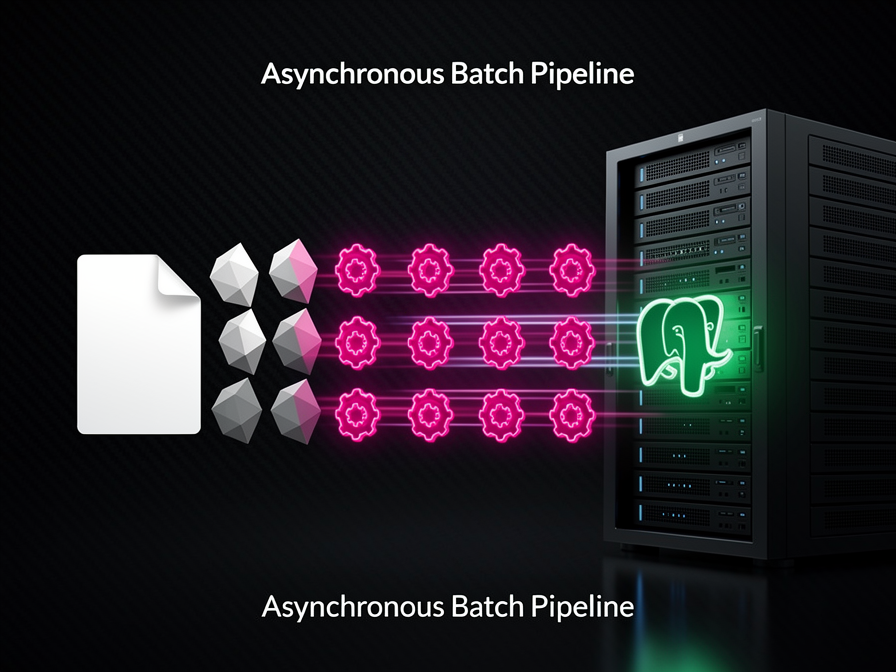

As I’ve been moving deeper into the Data Engineering side of things, I've noticed a shift. We’re moving away from keyword matching and toward semantic discovery. And the best part? If you're running Laravel in 2026, you might not even need a massive external vector database to do it.

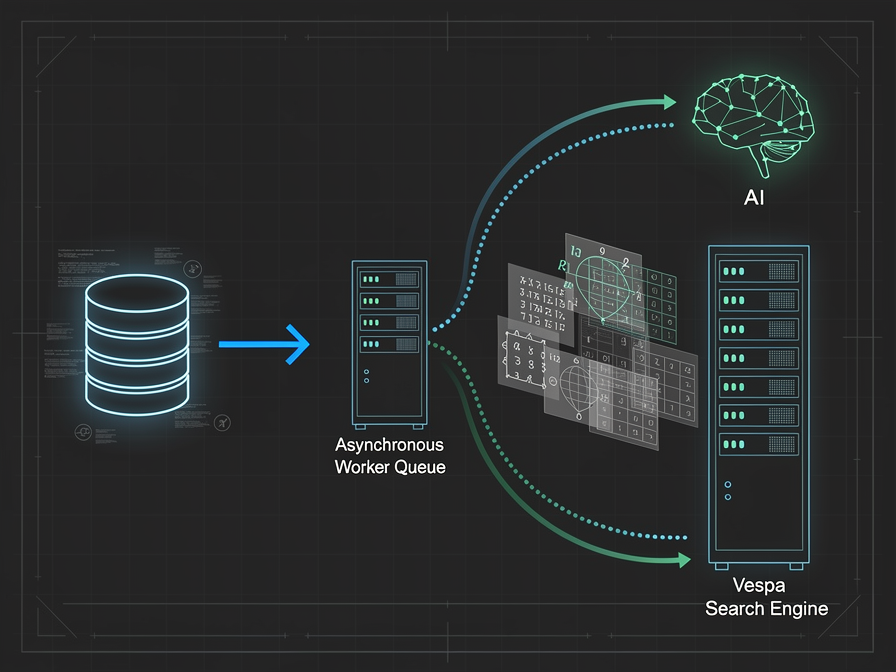

Wait, do I really need more infrastructure?

Here’s the thing. I love a good high-performance setup. I’ve written before about choosing between Vespa.ai and Elasticsearch for real-time search intelligence at massive scale. But let’s be real: sometimes you’re building a dealer directory or a localized marketplace like Toskie, and you don’t want the overhead of managing a whole new cluster just to let people search for "vibey cafes."

You know what? For most of us, PostgreSQL with the pgvector extension is more than enough. It’s like having a sports car engine tucked inside your reliable family sedan.

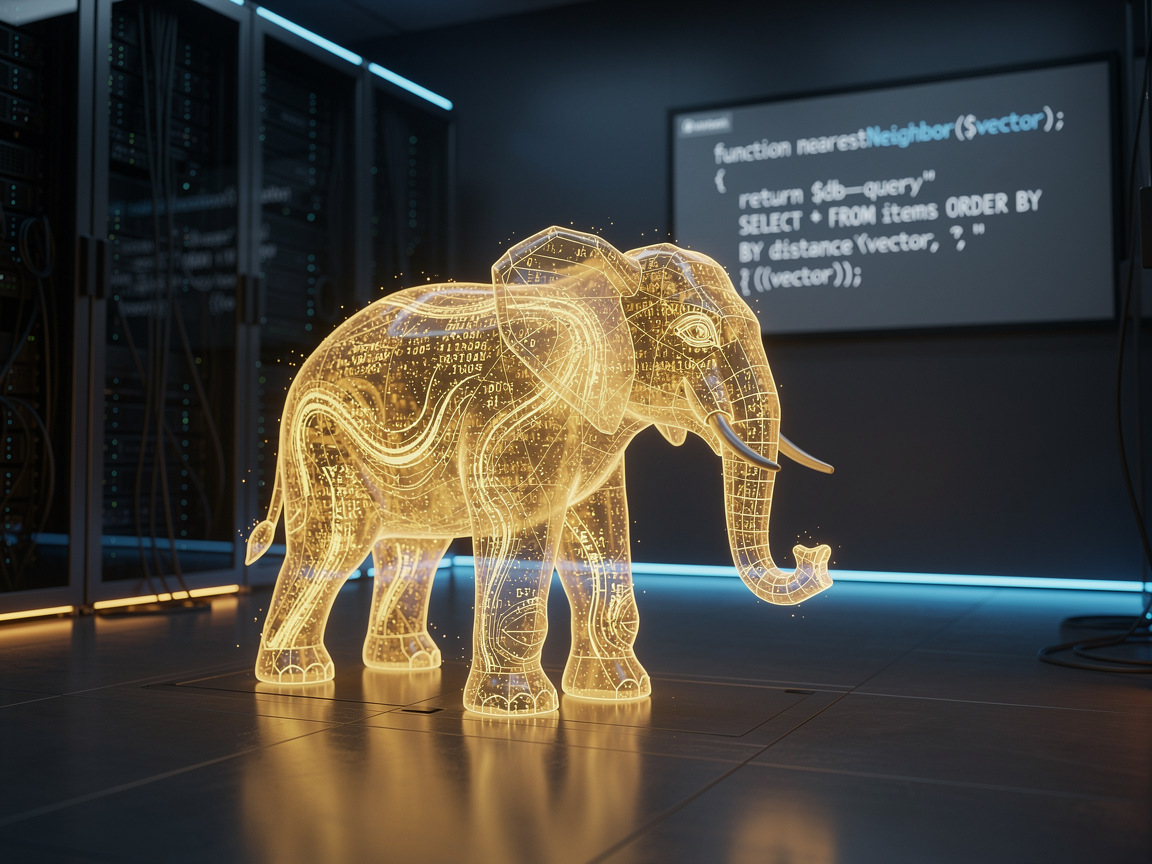

Eloquent finally speaks "Vector"

For the longest time, using vectors in Laravel felt like a hack. You’d be writing raw SQL in the middle of your beautiful models, and it felt... dirty. But with the latest updates, Eloquent handles vector embeddings almost as naturally as it handles strings or integers.
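Under the hood, "speaking vector" mostly means converting between a PHP array of floats and pgvector's text format, which looks like `[0.1,0.2,0.3]`. The two helpers below are a hypothetical, minimal sketch of the conversion an attribute cast has to perform; in a real app you would reach for a ready-made cast (the pgvector-php package ships a `Vector` cast) rather than these functions.

```php
<?php

// Hypothetical helper: PHP float array -> pgvector text literal, e.g. "[0.1,0.2,0.3]".
// json_encode of a plain list yields the bracketed, comma-separated form pgvector accepts.
function toVectorLiteral(array $values): string
{
    // array_values guarantees a list, so json_encode emits an array, not an object.
    return json_encode(array_map('floatval', array_values($values)), JSON_THROW_ON_ERROR);
}

// Hypothetical helper: pgvector text literal -> PHP float array.
function fromVectorLiteral(string $literal): array
{
    $inner = trim($literal, '[] '); // strip the brackets and any surrounding spaces
    if ($inner === '') {
        return [];
    }
    return array_map('floatval', explode(',', $inner));
}

$stored = toVectorLiteral([0.1, 0.2, 0.3]); // what would be written to the column
$loaded = fromVectorLiteral($stored);       // what the model would hand back to you
```

Keeping this conversion inside a cast is what lets the rest of your code treat `$product->embedding` as an ordinary array.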

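Imagine you have a Product model where each row stores an embedding of that product’s description. Instead of just matching the words "minimalist desk," the app sends that phrase to an embedding model, which converts it into a list of numbers (an embedding), and then asks the database, "Hey, what else looks like this?"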

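```php
// $userSearchVector is the embedding (an array of floats) of the user's search phrase.
// Each product row stores its own precomputed vector in the `embedding` column.
$products = Product::query()
    ->nearestNeighbor('embedding', $userSearchVector) // closest vectors first
    ->limit(5)                                         // keep the top five matches
    ->get();
// Note: in the pgvector-php package's Laravel integration, the equivalent scope is
// nearestNeighbors('embedding', $userSearchVector, Distance::Cosine).
```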

It’s simple. It’s clean. And with an index on the embedding column, it’s fast. You’re not just matching letters; you’re matching intent. It makes your app feel like it actually understands the user. Isn’t that what we’re all aiming for?
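If you want to see what "what else looks like this?" means numerically, here is a minimal brute-force sketch in plain PHP: it computes the cosine distance from a query vector to every stored embedding and keeps the k smallest. The function names and the three-dimensional toy vectors are hypothetical (real embedding models output hundreds or thousands of dimensions), and in the setup described here pgvector performs this comparison inside PostgreSQL, where `<=>` is its cosine-distance operator.

```php
<?php

// Cosine distance = 1 - cosine similarity; 0 means the vectors point the same way.
// Hypothetical helper; assumes equal-length, non-zero vectors.
function cosineDistance(array $a, array $b): float
{
    $dot = 0.0;
    $normA = 0.0;
    $normB = 0.0;
    foreach ($a as $i => $value) {
        $dot += $value * $b[$i];
        $normA += $value ** 2;
        $normB += $b[$i] ** 2;
    }
    return 1.0 - $dot / (sqrt($normA) * sqrt($normB));
}

// Exact k-nearest-neighbor search: score every row, sort by distance, keep k.
// $embeddings maps an item name to its vector; returns names, nearest first.
function bruteForceNearest(array $embeddings, array $query, int $k): array
{
    $distances = [];
    foreach ($embeddings as $name => $vector) {
        $distances[$name] = cosineDistance($vector, $query);
    }
    asort($distances); // ascending, keeping the name keys
    return array_slice(array_keys($distances), 0, $k);
}

// Hypothetical toy embeddings; a real embedding model would produce these.
$products = [
    'red sneakers'     => [0.9, 0.1, 0.0],
    'crimson trainers' => [0.85, 0.15, 0.05],
    'oak desk'         => [0.0, 0.2, 0.95],
];

// Hypothetical embedding of the search phrase "crimson sneakers".
$matches = bruteForceNearest($products, [0.88, 0.12, 0.02], 2);
```

Note that "red sneakers" comes back even though it shares no word with "crimson," which is exactly what a LIKE query cannot do. This exact scan touches every row, which is fine for a small directory; on larger tables, pgvector’s HNSW or IVFFlat indexes trade a little recall for much faster approximate search.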

The "Aha!" moment for your data

I was working on a project recently where we needed to suggest insurance plans based on vague user descriptions. Usually, that’s a nightmare of "if-else" statements. But with native vector search, we could simply embed the user’s description and compare it with embeddings of each plan’s features, matching on the "vibe" of their needs rather than on exact keywords.

It’s funny, because as a Solution Architect, I always used to reach for the most complex tool first. Now? I’m realizing that if I can stay within the Laravel ecosystem and keep the embeddings in the same database as the records they describe, so the two never drift out of sync, it’s a win for everyone.

Is there a catch?

Of course. There’s always a catch. If you’re pushing millions of updates a second, you’ll eventually hit the limits of what a standard relational database can do with vectors. That’s when you graduate to the big leagues with a dedicated search engine. But for 90% of the projects we’re building today, such as dealership locators or service marketplaces, this "native" approach is the sweet spot.

It saves time, it saves money, and your DevOps person won't want to strangle you for adding another service to the stack.
