Beyond the Dashboard: Engineering a High-Throughput GMB Data Pipeline

Managing a few local listings is easy; orchestrating thousands across a national network is a distributed systems challenge. Here’s how I build resilient, reactive GMB infrastructures.

When you move from managing a handful of local listings to orchestrating thousands across an enterprise network—something I’ve lived through while building systems for dealer directories and hyper-local marketplaces—the standard dashboard becomes a bottleneck.

In my experience, the gap between a "script that works" and an "enterprise-grade infrastructure" is defined by how you handle the chaos of the Google Business Profile ecosystem (formerly Google My Business, and still widely abbreviated as GMB). It’s not just about successful GET requests; it’s about defensive engineering.

The "Token Bucket" Strategy for Rate Limits

Google is protective of its resources. If you try to sync 5,000 locations in a simple foreach loop, you’ll hit a 429 Too Many Requests error before the first 100 are done.

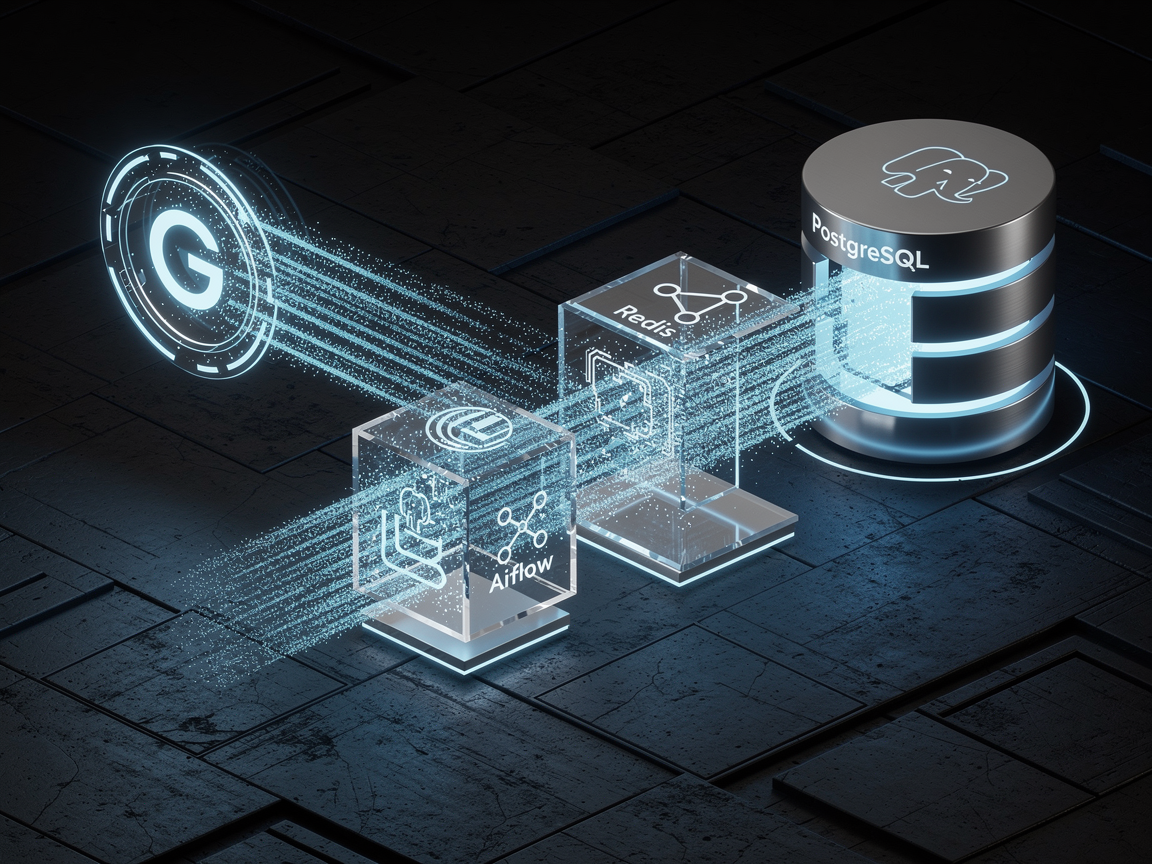

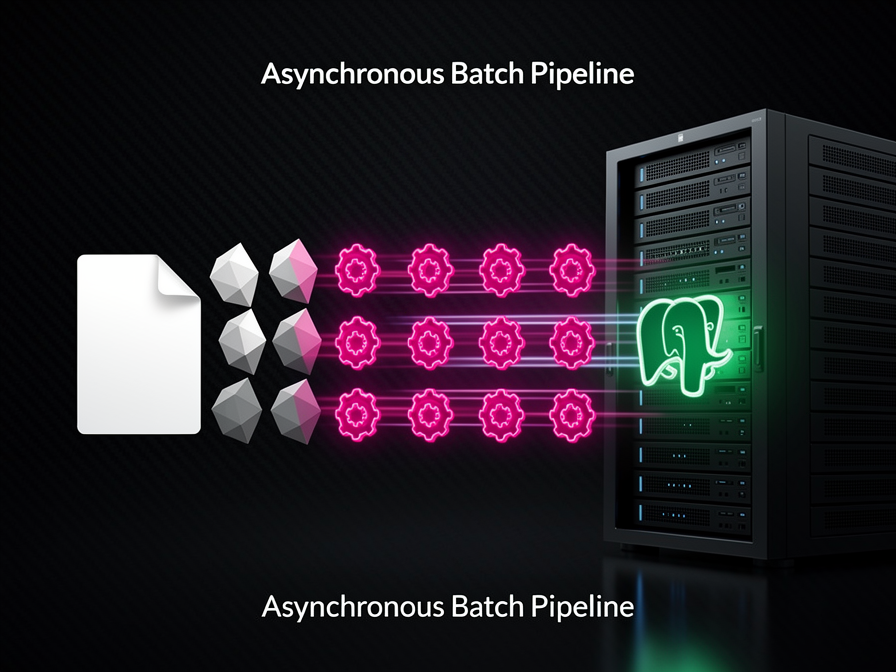

I don't just "loop" requests. I put a message queue in front of the API, usually backed by Redis, to act as a buffer. Workers drain that queue under a token bucket limiter, so outgoing traffic stays within Google’s per-project quotas. This turns a potentially destructive burst of traffic into a steady, manageable stream.
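To make the token bucket idea concrete, here is a minimal single-process sketch. The names `TokenBucket` and `drain`, and the `rate` and `capacity` parameters, are my own illustrative choices, not values from Google’s quota documentation. In a distributed setup the bucket state would live in a shared store such as Redis rather than in process memory.

```python
import time


class TokenBucket:
    """Holds up to `capacity` tokens, refilled at `rate` tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock              # injectable so the limiter can be tested
        self.tokens = float(capacity)   # start full: a short initial burst is allowed
        self.updated = clock()

    def _refill(self):
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now

    def try_acquire(self, cost=1):
        """Take `cost` tokens if available; return True on success."""
        self._refill()
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False

    def wait_time(self, cost=1):
        """Seconds until `cost` tokens are available (0.0 if available now)."""
        self._refill()
        missing = cost - self.tokens
        return max(0.0, missing / self.rate)


def drain(queue, bucket, send, sleep=time.sleep):
    """Send every queued item, sleeping whenever the bucket is empty."""
    for item in queue:
        while not bucket.try_acquire():
            sleep(bucket.wait_time())
        send(item)
```

With `rate=2, capacity=2`, the first two items go out immediately and every later item waits roughly half a second, which is the "steady stream" behavior described above.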

Reactive Architecture via Pub/Sub

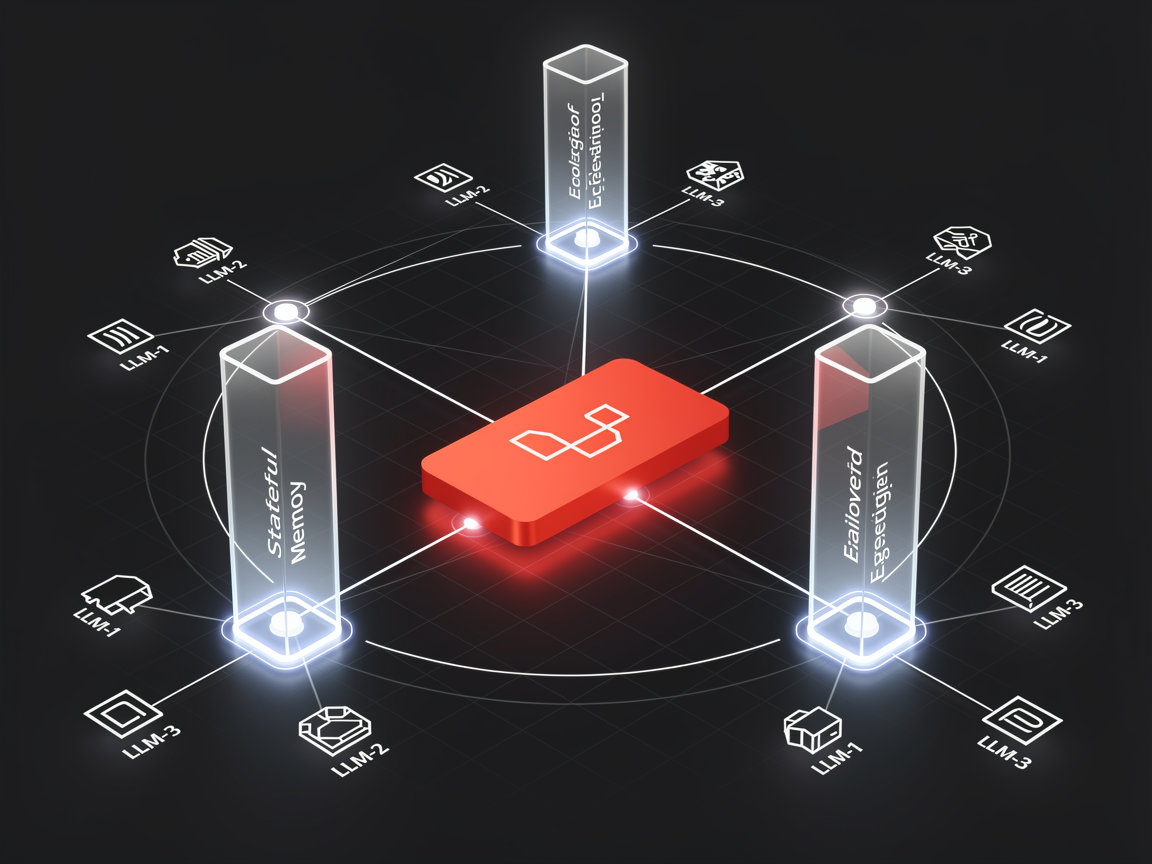

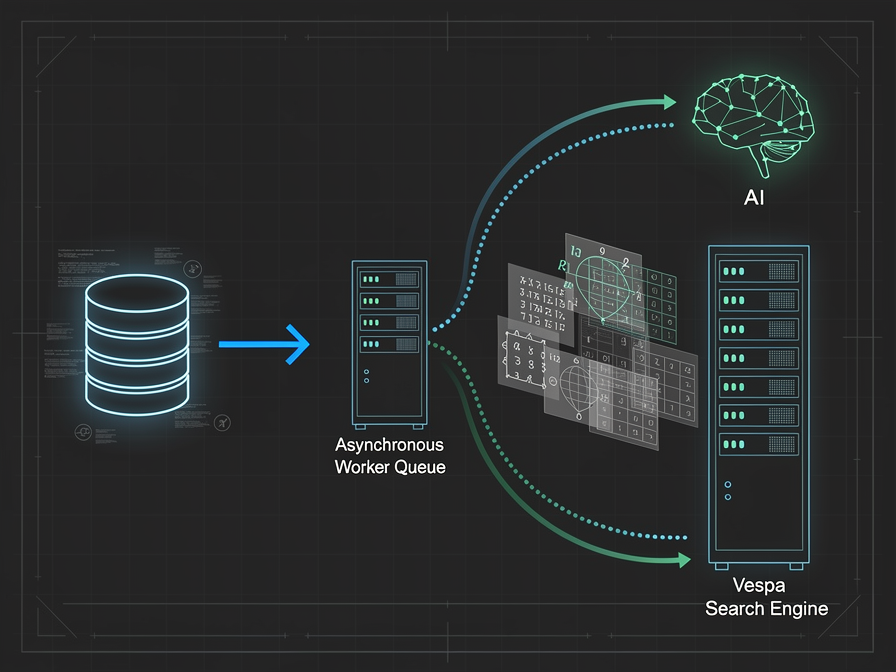

Polling for updates is an amateur move that kills your quota. In the systems I design, I treat GMB updates as events. Google can publish change notifications to a Cloud Pub/Sub topic, so the infrastructure reacts to changes instead of repeatedly asking for them.

Whether it's a new review or a change in business hours, a dedicated microservice listens for these events and triggers a background worker. This decoupled approach ensures that your main application database stays fast, even when Google is sending a flood of updates.
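As a rough sketch of that listener, the code below decodes a Pub/Sub push request body and hands the event to a background queue. The envelope shape (`message.data` as base64, `message.messageId`) follows Pub/Sub’s push format. The payload field `event_type`, the event names, and the job names in `ROUTES` are hypothetical placeholders, so check the real notification payload before relying on them.

```python
import base64
import json

# Hypothetical mapping from event type to background job name.
ROUTES = {
    "NEW_REVIEW": "sync_review",
    "HOURS_CHANGED": "sync_location",
}


def decode_push_envelope(envelope):
    """Extract the message ID and JSON payload from a Pub/Sub push body."""
    message = envelope["message"]
    payload = json.loads(base64.b64decode(message["data"]).decode("utf-8"))
    return message["messageId"], payload


class EventRouter:
    def __init__(self, enqueue):
        self.enqueue = enqueue   # callable(job_name, payload), e.g. a task queue client
        self.seen_ids = set()    # in-memory dedup; a real service would use a shared store

    def handle(self, envelope):
        """Return True if a job was enqueued, False if the event was skipped."""
        message_id, payload = decode_push_envelope(envelope)
        if message_id in self.seen_ids:
            return False         # Pub/Sub delivers at least once, so drop repeats
        self.seen_ids.add(message_id)
        job = ROUTES.get(payload.get("event_type"))
        if job is None:
            return False         # unknown event types are acknowledged and ignored
        self.enqueue(job, payload)
        return True
```

The handler does no API or database work itself. It only validates, deduplicates, and enqueues, which is what keeps the main application path fast during a flood of updates.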

The Data Engineering Pivot: From Cache to Pipeline

As I’ve shifted my focus toward Data Engineering, my approach to data storage has evolved. It’s no longer enough to just "cache" GMB data in PostgreSQL (though JSONB is still my go-to for its flexibility).

I now treat the tables where API responses land as the entry point for an ELT pipeline. Using Airflow to orchestrate syncs allows us to move beyond simple display data. We can now run historical trend analysis and compute location-based performance metrics that a standard API call simply can't provide. This is where the real value lies for large-scale networks like the ones I've built for automotive dealers.
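Here is a small sketch of the "load raw, transform later" idea. To keep it self-contained it uses the standard library’s `sqlite3` as a stand-in for PostgreSQL, with a text column in place of JSONB. The table name `raw_locations` and the `rating` payload field are assumptions for illustration. In the setup described above, each function would be a task in an Airflow DAG.

```python
import json
import sqlite3


def init_schema(conn):
    """Create the append-only landing table for raw API responses."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_locations ("
        "location_id TEXT, fetched_at TEXT, payload TEXT)"
    )


def load_raw(conn, location_id, fetched_at, payload):
    """Load step: store the response untouched, one row per sync."""
    conn.execute(
        "INSERT INTO raw_locations VALUES (?, ?, ?)",
        (location_id, fetched_at, json.dumps(payload)),
    )


def rating_trend(conn):
    """Transform step: rating change per location, earliest to latest snapshot."""
    rows = conn.execute(
        "SELECT location_id, payload FROM raw_locations "
        "ORDER BY location_id, fetched_at"   # ISO-8601 strings sort chronologically
    )
    first, last = {}, {}
    for location_id, payload in rows:
        rating = json.loads(payload)["rating"]
        first.setdefault(location_id, rating)  # keep only the earliest value
        last[location_id] = rating             # overwritten until the latest value
    return {loc: round(last[loc] - first[loc], 2) for loc in first}
```

Because every snapshot is kept rather than overwritten, questions like "how did this location’s rating move over the quarter" become a query over history instead of something a single API call would have to answer.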

Token Security and Self-Healing Flows

Managing OAuth for hundreds of different accounts is a high-stakes game. I treat refresh tokens like the "crown jewels"—encrypted at rest and stored in a secure vault.

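But security shouldn't break UX. I implement a self-healing middleware: when a 401 Unauthorized hits, the system uses the stored refresh token to fetch a new access token, retries the request once, and completes the operation. The user never sees the hiccup; the system just "works." If the retry also comes back 401, the refresh token itself has likely been revoked, so the account is flagged for re-authorization instead of being retried forever.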
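The retry flow can be sketched as a small wrapper. Everything here is illustrative: `send`, `get_token`, and `refresh_token` are hypothetical callables you would back with your HTTP client and token vault, and `AuthExpired` is a made-up exception name.

```python
class AuthExpired(Exception):
    """Raised when a request is still unauthorized after refreshing the token."""


def with_token_refresh(send, get_token, refresh_token, max_retries=1):
    """Wrap `send(request, token) -> (status, body)` with refresh-and-retry on 401."""

    def call(request):
        token = get_token()
        for attempt in range(max_retries + 1):
            status, body = send(request, token)
            if status != 401:
                return status, body          # success or a non-auth error: pass through
            if attempt < max_retries:
                token = refresh_token()      # fetch a new access token and try again
        # Still 401 after refreshing: the grant is probably revoked, so stop retrying.
        raise AuthExpired("still unauthorized after token refresh")

    return call
```

Capping the retries matters: without the cap, a revoked grant would turn the "self-healing" path into an endless loop of refresh attempts.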

Conclusion

Engineering for the GMB API is an exercise in managing constraints. By prioritizing a decoupled architecture and treating your data as a pipeline rather than a static mirror, you build a system that doesn't just function—it scales. Focus on the plumbing today, and your application will be ready for the millions of data points of tomorrow.
